Behavioral Research Software: The Visionary’s Guide to Multimodal Analysis in 2026

Future-Proof | Chapter #1

The most sophisticated research lab in the world is useless if its data streams live in isolation. You've likely felt the frustration of manual synchronization errors or the friction of scaling a study from a controlled lab to real-world spheres. It's exhausting to manage fragmented sensors when your goal is to understand the holistic human experience. Discover how to unify complex biophysical data into actionable human-centered insights using next-generation behavioral research software platforms that bridge the gap between technical precision and visionary discovery.

Experience a shift where unified equals easy. We'll explore how to build a hassle-free research ecosystem that offers real-time multimodal data visualization while staying ahead of the $30.62 billion market expansion projected by 2034. From navigating the high-risk requirements of the EU AI Act in August 2026 to mastering AI-powered analysis with Prophea.X, this guide prepares you to lead the next era of human-technology interaction. Enter a space where your data doesn't just exist; it empowers.

Key Takeaways

Transition from fragmented data silos to a unified research chain where multimodal biophysical streams exist in perfect synchronicity.

Master the use of AI-powered behavioral research software to automate complex coding and transform raw sensor data into deep human insights.

Evaluate the critical trade-offs between open-source friction and the absolute compatibility required for high-stakes industrial and academic spheres.

Discover how to architect a future-proof ecosystem by selecting the right synergy between mobile hardware like Dikablis glasses and advanced analysis platforms.

Experience the next era of human-centered analysis where you can dwell and connect multimodal data streams with zero technical friction.

The Evolution of Behavioral Research Software: From Silos to Synchronicity

Modern behavioral research software serves as the critical link between raw biophysical data and human insight. It’s the engine that transforms a stream of eye-tracking coordinates or heart rate fluctuations into a coherent narrative of human intent. For decades, researchers were tethered to manual video coding, a process that was both labor-intensive and prone to subjective bias. Today, we’ve moved into an era of real-time, multimodal data acquisition where the software doesn’t just record; it interprets.

This evolution is grounded in the science of behavior informatics, which provides the foundational framework for turning complex interactions into actionable knowledge. As we enter 2026, the requirements for behavioral research software have shifted from simple recording to the creation of a unified research ecosystem. This shift is characterized by the concept of “spheres.” Instead of looking at a subject through a single lens, visionary researchers now capture the 360-degree environment. They simultaneously analyze how a person moves through a physical space, how their gaze shifts across a dashboard, and how their physiological state reacts to environmental stressors.

The “human-centered” evolution demands more than just a collection of data points. It requires a deep understanding of the “research chain,” where every piece of hardware and every line of code serves the ultimate goal of shaping a better future. We’re no longer just observers; we’re partners in the human-technology interaction. This level of insight is only possible when technical precision meets a visionary perspective on what data can actually achieve.

Breaking the Data Silo: Why Synchronization is Non-Negotiable

The hidden cost of millisecond lag in your research is more than a technical annoyance; it’s a scientific liability. When eye-tracking data and EEG signals are even slightly out of alignment, the correlation between a visual stimulus and a neural response dissolves. Absolute compatibility across different sensor brands is the only way to prevent the fragmentation of results. You need a platform that treats every data stream as part of a single, organic whole. Multimodal synchronicity is the gold standard for 2026 research.
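
To make the alignment problem concrete, here is a minimal sketch of nearest-timestamp matching between two streams. It is an illustration only, not how Prophea.X or any specific product synchronizes data. The function name `align_nearest`, the sample rates, and the 2 ms tolerance are all assumptions chosen for the example, and both streams are assumed to already share one clock.

```python
from bisect import bisect_left

def align_nearest(ref_ts, other_ts, tolerance_ms):
    """For each reference timestamp, return the index of the nearest
    sample in other_ts, or None if the gap exceeds tolerance_ms.
    Both lists must be sorted ascending and use the same clock (ms)."""
    matches = []
    for t in ref_ts:
        i = bisect_left(other_ts, t)
        # Candidates: the sample just before and the sample at/after t.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other_ts)]
        if not candidates:
            matches.append(None)
            continue
        best = min(candidates, key=lambda j: abs(other_ts[j] - t))
        within = abs(other_ts[best] - t) <= tolerance_ms
        matches.append(best if within else None)
    return matches

# Hypothetical data: gaze samples at roughly 60 Hz, EEG at 250 Hz (every 4 ms).
gaze_ts = [0.0, 16.7, 33.3, 50.0, 400.0]
eeg_ts = [float(t) for t in range(0, 101, 4)]  # 0, 4, ..., 100

pairs = align_nearest(gaze_ts, eeg_ts, tolerance_ms=2.0)
print(pairs)
```

The last gaze sample falls well outside the EEG recording, so it is left unmatched rather than paired with a distant sample. Rejecting out-of-tolerance pairs in this way is one simple guard against silently correlating a stimulus with the wrong neural response.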

Academic Rigor Meets Industrial Agility

Ergoneers bridges the gap between the precision of university spin-offs and the speed of global industry leaders. Our heritage is deeply rooted in the Technical University of Munich (TUM), ensuring that every feature we develop stands up to the highest levels of scientific scrutiny. We don’t just ask you to trust our algorithms; we invite you to dwell and connect within our Publication Hub, where hundreds of peer-reviewed studies validate the accuracy and reliability of our platforms. This combination of academic heritage and industrial scale allows you to move seamlessly from a controlled lab environment to complex, real-world training simulators without losing a single frame of insight.

Core Capabilities of Advanced Behavioral Analysis Platforms

Experience the transition from simple data collection to true behavioral synthesis. Advanced behavioral research software in 2026 acts as a cognitive extension for the researcher. It doesn’t just store biophysical signals; it weaves them into a synchronized tapestry of human experience. By integrating Dikablis eye-tracking with EEG, EMG, and GSR, modern platforms provide a holistic view of the subject’s internal and external state. This level of multimodal integration ensures that you aren’t just looking at what a person does, but understanding the physiological “why” behind every action.

AI-powered coding represents the most significant leap in research efficiency. Traditional methods required hours of manual video review to identify specific behaviors. Now, deep-learning algorithms handle the heavy lifting. These systems recognize patterns across diverse psychological research methods, allowing scientists to focus on high-level interpretation rather than tedious data entry. Real-time visualization further empowers this process. You can see live heatmaps and physiological spikes as they happen, turning post-hoc analysis into an immediate discovery phase. For those building bespoke solutions, a robust API and SDK offer the flexibility to integrate custom sensors into the research chain with absolute compatibility.

Deep Learning and AI: Expanding the Research Spheres

AI now automates the identification of Areas of Interest (AOI) even in highly dynamic environments. Whether a subject is navigating a busy street or interacting with a complex cockpit, the software tracks and labels visual targets with hassle-free accuracy. This shift marks the transition from descriptive data to predictive behavioral modeling. We’re moving toward a future where behavioral research software anticipates human error or cognitive load before it manifests in a critical mistake. This predictive edge is essential for safety-critical industries like aerospace and medicine.
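
For readers new to AOI metrics, the sketch below shows the simplest possible version of the idea: static rectangular AOIs and a dwell-time total per AOI. It does not reproduce the deep-learning tracking described above, which follows targets in dynamic scenes. The `AOI` class, the `dwell_times_ms` function, the coordinates, and the fixed 10 ms sample interval are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AOI:
    """A static rectangular Area of Interest in screen pixels."""
    name: str
    x_min: float
    y_min: float
    x_max: float
    y_max: float

    def contains(self, x, y):
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max

def dwell_times_ms(gaze, aois, sample_interval_ms):
    """Add sample_interval_ms to an AOI's total for every gaze sample inside it."""
    totals = {aoi.name: 0.0 for aoi in aois}
    for x, y in gaze:
        for aoi in aois:
            if aoi.contains(x, y):
                totals[aoi.name] += sample_interval_ms
                break  # assumes non-overlapping AOIs; first match wins
    return totals

# Hypothetical dashboard layout and gaze samples (x, y) in pixels.
aois = [
    AOI("speedometer", 100, 400, 300, 600),
    AOI("mirror", 800, 50, 1000, 150),
]
gaze = [(150, 450), (160, 455), (900, 100), (500, 500), (155, 460)]

dwell = dwell_times_ms(gaze, aois, sample_interval_ms=10.0)
print(dwell)
```

In a dynamic environment the rectangles would have to move with the scene from frame to frame, which is the part that automated AOI detection is meant to take over from manual annotation.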

HMI and Simulator Integration: Testing the Future

Testing the next generation of technology requires a seamless bridge between the physical and digital. Modern software excels in synchronizing with automotive simulators and immersive VR/AR environments. Mobile eye-tracking systems are essential for validating these lab findings in real-world spheres where conditions are unpredictable. Whether you’re analyzing driver distraction or the nuances of human-robot interaction, the ability to maintain synchronicity across platforms is vital for reliable results. Explore how our laboratory consulting can help you architect these complex, integrated environments for your next breakthrough.

Implementation Strategy: Building a Multimodal Research Ecosystem

Enter the implementation phase by defining your research sphere. Whether you’re operating in a stationary lab, a mobile real-world environment, or a fully immersive VR setup, your choice of behavioral research software dictates the boundaries of your discovery. Selection begins with the synergy between mobile eye-tracking wearables like Dikablis glasses and Prophea.X. This pairing ensures that your hardware and software speak the same language from the first millisecond. You aren’t just buying tools; you’re architecting a gateway to human understanding that spans across academic and industrial applications.

Achieving absolute compatibility across biophysical inputs is the next hurdle. Most researchers struggle with the technical friction of aligning EEG, GSR, and eye-tracking streams. A visionary ecosystem eliminates this friction by design. As your study scales from a pilot project to a big-data behavioral analysis, your data management must remain robust and scalable. Unified systems prevent the data fragmentation that often halts progress in its tracks. You’ll find that having a single source of truth for all biophysical data makes the transition from raw numbers to actionable insights significantly faster and more reliable.

Designing the Human-Centered Lab

Discover how to set up a UX Lab through our dedicated guide. Designing a human-centered lab requires more than just high-end sensors; it requires a strategic layout that respects the research chain. Turnkey solutions allow for rapid scaling in corporate environments where time-to-market is critical. We provide the consulting expertise to ensure your lab is an empowering space for both the researcher and the subject. This professional scale ensures that every study you conduct is backed by a heritage of academic excellence and industrial reliability.

Advanced API Integration and Customization

Advanced API integration allows you to leverage eye-tracking data within your proprietary software environments. You can create custom behavioral coding schemes that evolve as your study matures. This flexibility ensures that your behavioral research software doesn’t just record history but actively shapes the future of your specific industry. By utilizing our SDKs, you can bridge the gap between standard research protocols and your unique technological requirements. This level of customization is what separates a standard lab from a visionary research hub.

Partner with us to build your custom research ecosystem today.

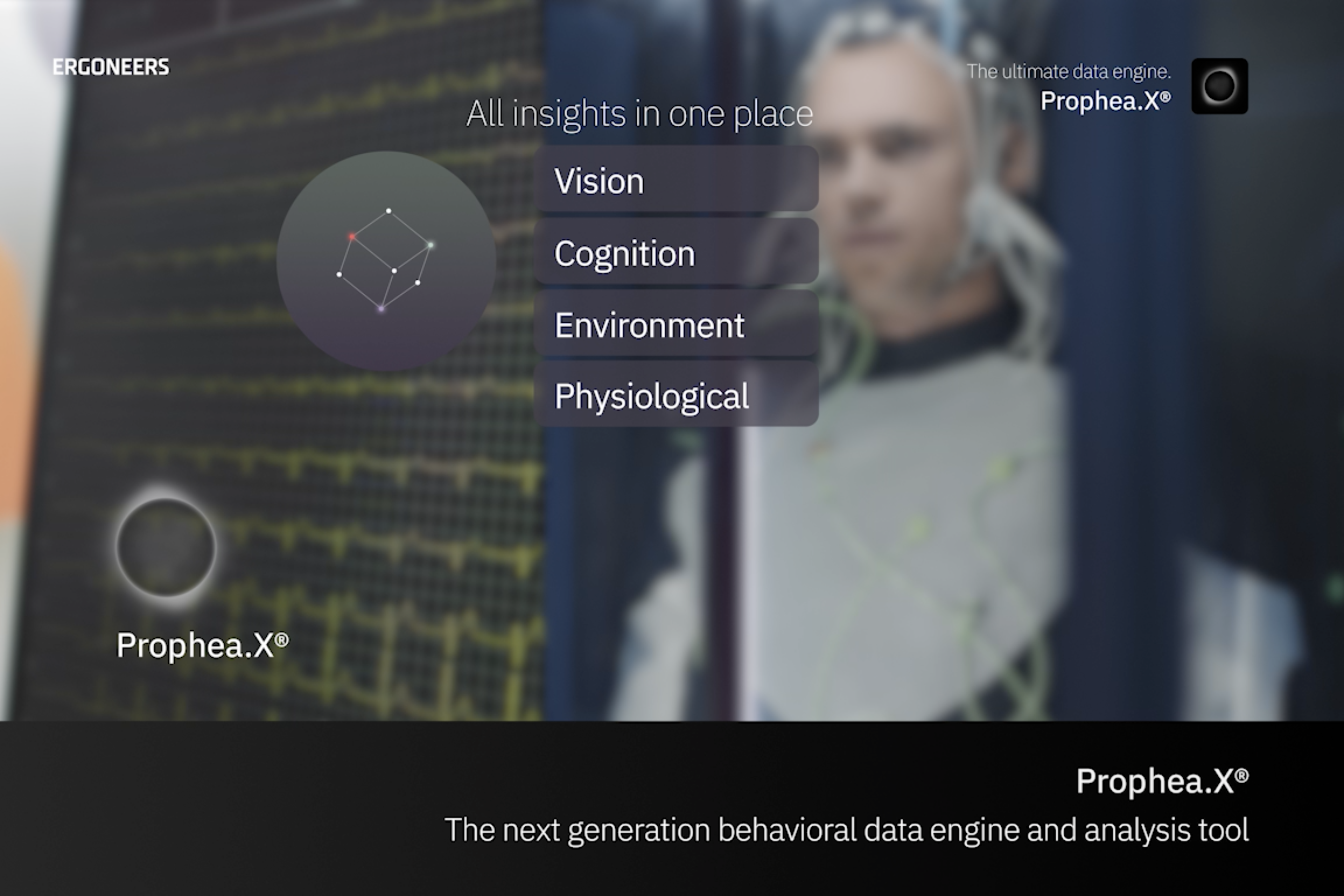

Prophea.X: Experience the Next Era of Behavioral Insight

Enter the pinnacle of the research chain. Prophea.X acts as the visionary expert in multimodal data analysis, bridging the gap between raw biophysical signals and profound human understanding. While previous sections detailed the necessity of synchronicity and the power of AI, this platform is where those elements converge into a single, intuitive experience. It’s designed for the researcher who refuses to be limited by technical friction. By allowing you to dwell and connect with your data, Prophea.X transforms the often chaotic process of analysis into a streamlined journey toward discovery.

Our human-centered ethos drives every feature within this behavioral research software. We believe that data shouldn’t just be collected; it should be used to shape a better future. Whether you’re optimizing the safety of autonomous vehicles or refining the ergonomics of industrial workstations, the goal remains the same: empowering the human at the center of the technology. Experience how our software expands your research spheres, providing the clarity needed to lead in an increasingly complex world.

Unified Data, Empowered Researchers

Prophea.X works across industries, moving effortlessly from the high-stakes environment of automotive simulator training to the precise demands of sports science. This versatility is backed by the Ergoneers heritage, a 20-year legacy of innovation rooted in the Technical University of Munich. We’ve spent two decades refining the tools that industry leaders trust to deliver absolute compatibility and hassle-free accuracy. As the global behavior analytics market moves toward its projected $7.63 billion valuation by 2034, having a partner with this depth of academic rigor is essential. Experience the future of research: discover Prophea.X and see how a unified ecosystem redefines what’s possible in your lab.

Beyond Software: Professional Training and Workshops

Maximizing the utility of advanced behavioral research software requires more than just a manual. We offer expert-led behavioral research workshops designed to move your team from basic operation to visionary analysis. These sessions cover everything from the nuances of eye-tracking integration to the implementation of complex behavioral coding schemes. For labs with highly specialized requirements, our custom integration services ensure that your proprietary hardware functions in perfect synchronicity with our analysis platforms. We don’t just provide the software; we provide the heritage of knowledge needed to navigate the next era of human-centered discovery.

Shaping the Next Era of Human Insight

The transition from fragmented data to absolute synchronicity is the defining shift of our time. By embracing the power of AI and multimodal integration, you move beyond mere observation into the realm of predictive behavioral modeling. We’ve explored how the right behavioral research software serves as the vital link between raw biophysical signals and the human-centered future we aim to build. Success in 2026 requires a platform that offers absolute compatibility with multimodal sensors while providing the reliability demanded by high-stakes automotive and aerospace environments.

Ergoneers stands as your partner in this evolution. Founded as a TUM spin-off with over 20 years of heritage, we provide the intellectual leadership and technical precision needed to expand your research spheres. Our commitment to hassle-free accuracy ensures that your focus remains on discovery rather than technical friction. Enter the next era of human-centered research: explore Prophea.X and discover how a unified ecosystem empowers your vision. The future of human-technology interaction is yours to shape.

Propelling Research Forward with Ergoneers Prophea.X

Behavioral research software in the era of AI

The next era of research requires more than just hardware; it demands a seamless ecosystem that connects data capture, synchronization, and analysis. Experience this future with Prophea.X, the AI-powered platform designed to manage the complexity of modern behavioral studies. High-precision data from Dikablis eye-tracking systems provides the foundation, while Prophea.X delivers the insights. With a heritage of academic and industrial success documented in our Publication Hub, Ergoneers offers a partnership that extends beyond tools to include lab consulting and custom integration.

Prophea.X: Expanding Spheres of Insight

The “Unified equals easy” philosophy is the core of Prophea.X. It is one software platform for all your data, eliminating the silos that stall research. Its most powerful feature is AI-driven behavioral coding, which automates the tedious process of annotating events and behaviors, saving researchers hundreds of hours of manual work. Prophea.X allows you to visualize the entire research chain, from raw, synchronized sensor data to compelling, publication-ready metrics and reports, empowering you to discover deeper and more reliable insights.

Your Partner in Human-Centered Innovation

Building a state-of-the-art research lab is a complex undertaking. Ergoneers provides expert consulting services to help you design a bespoke behavioral research facility tailored to your specific goals. We offer comprehensive training and workshops to empower your team with the latest eye-tracking techniques and multimodal analysis methods. Take the next step in shaping a better, human-centered future. Join one of our free webinars or connect with our experts to start your journey.

Frequently Asked Questions

Prophea.X is the premier choice for researchers seeking absolute compatibility and real-time synchronicity across complex biophysical data streams on a flexible and infinitely scalable multi-subject level. It bridges the gap between raw sensor data and human-centered insight by providing a unified environment for analysis. This platform is specifically engineered to handle the demands of the next era of human-technology interaction with visionary precision and academic rigor.

Yes, Prophea.X is designed for absolute compatibility with a wide array of third-party biophysical sensors including EEG, GSR, and EMG. Its flexible architecture ensures that these diverse inputs are unified into a single, reliable research chain with zero technical friction. This allows you to dwell and connect with your data instead of managing fragmented hardware drivers.

AI automates the identification of Areas of Interest (AOI) and reduces manual effort through deep-learning algorithms that recognize patterns in human movement and gaze. This behavioral research software capability transforms hours of tedious video review into immediate, actionable results. By shifting from descriptive analysis to automated event coding and predictive modeling, AI empowers researchers to anticipate human error before it manifests in critical environments.

Yes, advanced platforms like Prophea.X integrate seamlessly with VR and AR environments to capture human-technology interaction within immersive spheres. This allows researchers to validate lab findings within complex digital simulations while maintaining perfect synchronicity between eye-tracking and environmental data. It’s an essential capability for testing HMIs in automotive or aerospace industries where real-world testing is high-risk.

D-Lab is our established, first-generation platform for multimodal data collection, while Prophea.X is our next-generation platform built for the AI-powered revolution. Prophea.X offers enhanced cross-industry flexibility and more advanced real-time visualization capabilities. While both maintain our commitment to scientific authority, Prophea.X is designed to expand your research spheres through deeper integration of deep-learning algorithms.

You ensure accuracy by using a unified behavioral research software ecosystem that manages all biophysical inputs through a single, synchronized clock. Prophea.X eliminates the millisecond lag often found in disjointed setups by providing a turnkey solution for sensor alignment. This level of synchronicity is vital for high-stakes industrial research where data integrity is the backbone of your results.

Yes, we provide comprehensive Behavioral Research Lab Consulting to help you architect a future-proof ecosystem from the ground up. We assist with hardware selection, sensor integration, and workflow optimization to ensure a hassle-free start for your team. Our experts bring over 20 years of heritage to every project, ensuring your lab meets the highest standards of academic and industrial rigor.

Yes, Dikablis glasses are designed for flexibility and can be integrated with other platforms through our robust eye-tracking APIs and SDKs. However, the highest level of technical synergy and hassle-free accuracy is achieved when they are paired with our native software solutions. This combination ensures that your hardware and software function in perfect synchronicity across all research spheres.